SIGNAL SUMMARY

Today you will see why traditional A/B testing quietly destroys high-ticket ad budgets and what to do instead.

Inside this edition:

Why A/B testing is mathematically broken for high-ticket conversions

The real reason most funnels never become predictable

What microtesting actually isolates and validates

How to structure 12 to 15 controlled tests the right way

What this means for your cost per qualified appointment

If a single AI prompt can replace your marketing strategy, you never built a strategy.

You built a shortcut.

In 2026, shortcuts are everywhere. AI can write your hooks, assemble your landing page, and spin up ad variations in seconds. Speed is no longer rare.

Clarity is.

And if you cannot explain what actually caused the performance, you cannot repeat it. You cannot scale it.

And you definitely cannot improve it.

The Problem: AI Gave You Speed, But Took Away Your Signal

AI can generate content instantly (50 hooks in 10 seconds), but it can’t tell you which ones resonate with your specific target audience.

The result is an overload of content and a shortage of actionable insight. Automated tools like Advantage+ pick your audience, headlines, creatives, and pretty much everything in between, leaving you disconnected from what actually works and unable to troubleshoot when results falter.

Founders now copy the same AI-generated ads and slop and push them to broad audiences using the same generic frameworks, eroding any competitive edge.

The essential skills of testing, analyzing data, and iterating on market feedback are being neglected; most founders skip straight to mass generation without understanding what drives results. You can only improve what you’ve tested. AI is a tool, not a strategy. Without microtesting (making small changes and measuring their impact), you’re scaling untested assumptions that merely sound convincing.

The solution isn’t abandoning AI but validating its output through testing.

Microtesting is replacing traditional A/B testing for founders who want real answers about what works.

This post explains the differences between A/B testing and microtesting, when each is appropriate, why high-ticket founders are shifting toward microtests, and how to start running them today.

What Is A/B Testing? (And Why It’s Breaking in 2026)

A/B testing compares two versions of a single variable, such as a headline or an image, by splitting traffic equally between them and waiting for statistical significance.

It works, and it has been the gold standard in digital marketing for 20 years.
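To make “waiting for statistical significance” concrete, here is a minimal sketch of a two-proportion z-test. It is one common way to check whether variant B’s conversion rate really differs from variant A’s. Testing tools may use other methods (sequential or Bayesian tests), and the visitor and conversion counts below are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference between two conversion rates."""
    p_a = conv_a / n_a
    p_b = conv_b / n_b
    # Pooled rate under the null hypothesis that both variants convert equally.
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal CDF.
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


# Hypothetical: 5,000 visitors per variant, 10 vs. 15 conversions.
z, p = two_proportion_z_test(10, 5000, 15, 5000)
print(f"z = {z:.2f}, p = {p:.3f}")  # p is well above 0.05, so there is no verdict yet
```

With rare conversions, even a 50% relative lift (10 vs. 15) is nowhere near significant. That is the waiting game the rest of this section describes.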

But here’s the problem:

A/B testing was designed for a world with cheap traffic and stable algorithms, and it presupposes a fully built-out funnel.

What changed:

Meta’s algorithm now optimizes creatives dynamically through Advantage+. It doesn’t split traffic 50/50; it shifts budget toward what’s working in real time. In effect, the algorithm takes over your "controlled" A/B test before you gather meaningful data.

Rising CPMs mean the “wait for statistical significance” approach now costs 2–5x what it did three years ago. At $50–100/day in ad spend over a typical two-to-three-week test, you’ll burn through $700–$2,100 before your A/B test reaches a conclusion, and even then the result may be inconclusive.
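Meta doesn’t publish how Advantage+ allocates budget. The sketch below therefore uses Thompson sampling, a standard multi-armed bandit technique, as a stand-in. It is not Meta’s algorithm. It only shows why a system that shifts spend toward early winners stops giving each variant an equal share of traffic. The click-through rates are hypothetical.

```python
import random


def thompson_allocation(true_ctrs: list[float], impressions: int, seed: int = 7) -> list[int]:
    """Toy budget shifter: each impression goes to the variant whose sampled CTR is highest."""
    rng = random.Random(seed)
    clicks = [0] * len(true_ctrs)
    shown = [0] * len(true_ctrs)
    for _ in range(impressions):
        # Draw a plausible CTR for each variant from its Beta(1 + clicks, 1 + misses) posterior.
        samples = [rng.betavariate(1 + c, 1 + s - c) for c, s in zip(clicks, shown)]
        arm = samples.index(max(samples))
        shown[arm] += 1
        # Simulate whether this impression produced a click.
        if rng.random() < true_ctrs[arm]:
            clicks[arm] += 1
    return shown


# Hypothetical CTRs: variant A at 3%, variant B at 1%.
print(thompson_allocation([0.03, 0.01], 20_000))
```

The weaker variant ends up with far fewer impressions than a 50/50 split would give it. That is good for short-term performance but bad for a clean head-to-head comparison.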

For founders with high-ticket offers ($3K–$50K+), the math gets worse.

Your audience is smaller. Your conversion events are rarer. Statistical significance becomes nearly impossible without massive spending.
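A standard sample-size formula for comparing two conversion rates (two-sided test, 95% confidence, 80% power) shows why rare conversion events make significance so expensive. The rates below are hypothetical and chosen only to contrast a rare event with a common one.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_variant(p1: float, p2: float, alpha: float = 0.05, power: float = 0.8) -> int:
    """Visitors needed in EACH variant to detect a change from rate p1 to rate p2."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    p_bar = (p1 + p2) / 2
    numerator = (z_alpha * sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return ceil(numerator / (p1 - p2) ** 2)


# Same 50% relative lift, very different costs (hypothetical rates).
print("1% -> 1.5%:", sample_size_per_variant(0.01, 0.015), "visitors per variant")
print("10% -> 15%:", sample_size_per_variant(0.10, 0.15), "visitors per variant")
```

The same relative lift needs roughly ten times more traffic when the baseline conversion rate is ten times smaller. That is the high-ticket situation in a nutshell.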

A/B testing isn’t dead. But for most founders, it’s the wrong tool.

What Is Microtesting?

Microtesting is a rapid ad validation method that isolates and tests individual ad elements (hooks, offers, audiences, and formats) in short, controlled bursts designed to surface signal fast instead of waiting for statistical significance.

Instead of testing “Ad A vs. Ad B” and waiting two weeks, microtesting tests the components that make ads work, one at a time, in 48-hour sprints.

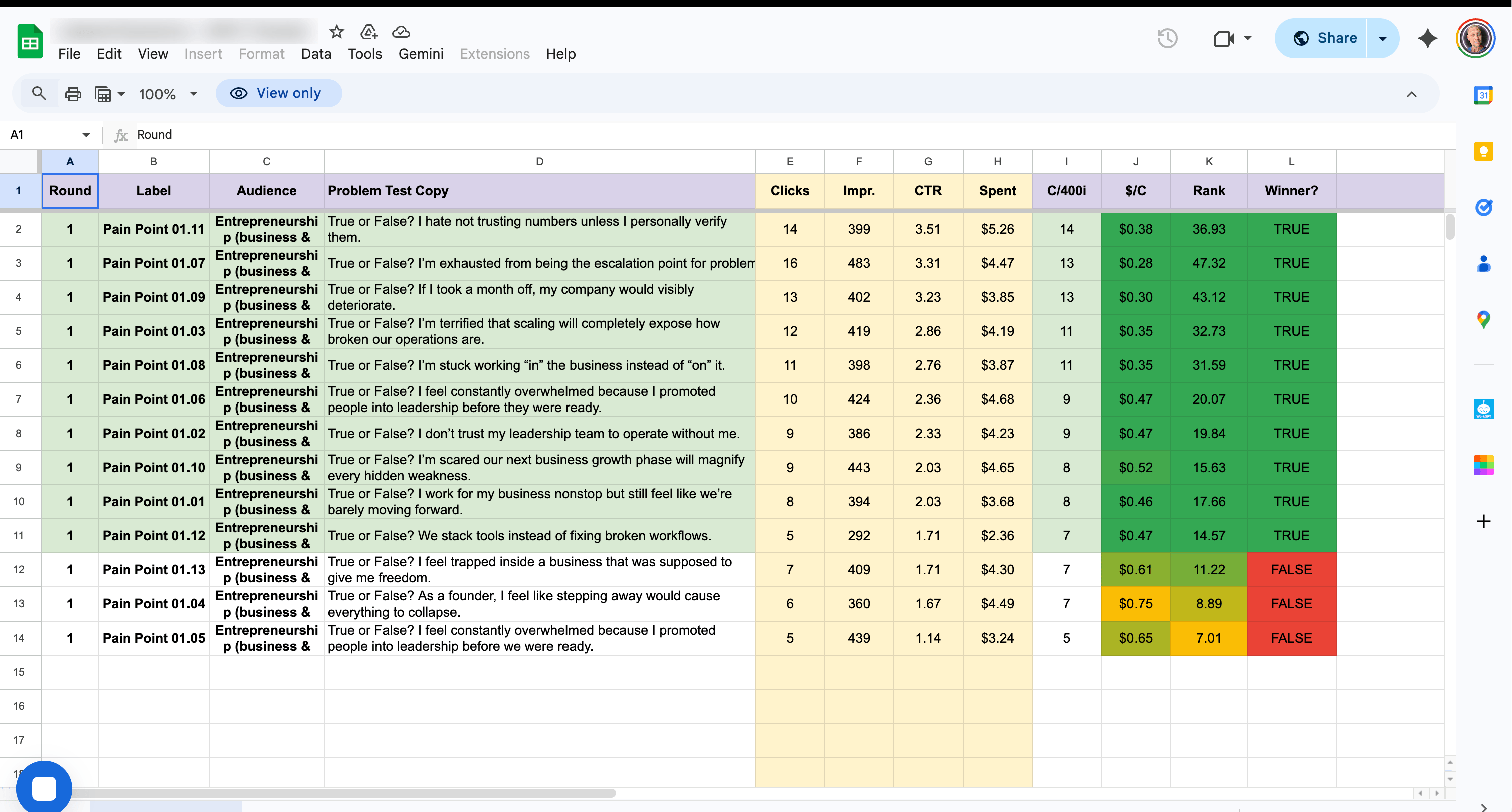

Pain points & Problem Statements test

Here’s how it works in practice:

Step 1: You launch 10 to 15 ad variations, each isolating one variable (e.g., four different hooks with the same body copy and creative). Budget: $50–100 total.

Step 2: After 24–48 hours, you analyze early performance signals—CTR (click-through rate), thumb-stop ratio, and CPC (cost per click)—to identify clear winners and losers.

Step 3: You combine winning elements into your next round of tests, where you then isolate a different variable, all without building landing pages or funnels.

The whole process costs $20–50 per round and takes 48 hours. Compare that to $2,000+ and 14 days for a standard A/B test.
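Steps 2 and 3 can be sketched in code. The sketch assumes thumb-stop ratio means 3-second video views divided by impressions, a common industry definition rather than one given in this post. It ranks variants by CTR, then thumb-stop ratio, then lowest CPC; that ordering is a judgment call. All class names, field names, and numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class VariantStats:
    """Early signals for one ad variant after a 24-48 hour run (hypothetical fields)."""
    name: str
    impressions: int
    clicks: int
    three_second_views: int  # assumed input for the thumb-stop ratio
    spend: float

    @property
    def ctr(self) -> float:
        return self.clicks / self.impressions

    @property
    def thumb_stop_ratio(self) -> float:
        # Assumed definition: 3-second video views divided by impressions.
        return self.three_second_views / self.impressions

    @property
    def cpc(self) -> float:
        return self.spend / self.clicks if self.clicks else float("inf")


def rank_variants(variants: list[VariantStats]) -> list[VariantStats]:
    """Step 2: order variants best-first by CTR, then thumb-stop ratio, then lowest CPC."""
    return sorted(variants, key=lambda v: (-v.ctr, -v.thumb_stop_ratio, v.cpc))


def next_round(winners: dict[str, str], variable: str, options: list[str]) -> list[dict[str, str]]:
    """Step 3: hold every validated winner fixed and vary exactly one new element."""
    return [{**winners, variable: option} for option in options]


# Round 1: three hooks with the same body copy and creative (hypothetical numbers).
hooks = [
    VariantStats("hook_a", impressions=4000, clicks=60, three_second_views=1200, spend=30.0),
    VariantStats("hook_b", impressions=3800, clicks=25, three_second_views=700, spend=28.0),
    VariantStats("hook_c", impressions=4100, clicks=45, three_second_views=1100, spend=31.0),
]
ranked = rank_variants(hooks)
for v in ranked:
    print(f"{v.name}: CTR {v.ctr:.2%}, thumb-stop {v.thumb_stop_ratio:.1%}, CPC ${v.cpc:.2f}")

# Round 2: keep the winning hook and isolate the offer instead.
winners = {"hook": ranked[0].name, "body": "body_v1", "creative": "creative_v1"}
for ad in next_round(winners, "offer", ["free_audit", "case_study_call", "roadmap_session"]):
    print(ad)
```

Because `next_round` copies the winners into each new variant, only the element under test changes between ads in a round. That one-variable-at-a-time rule is what keeps each round interpretable.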

Microtesting vs A/B Testing: The Real Differences

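Here’s the head-to-head comparison that matters. A standard A/B test takes about 14 days and $2,000+ to compare two finished ads on conversions. A microtest round takes about 48 hours and $20–50 to compare isolated components on leading indicators like CTR, CPC, and hook rate.

The fundamental difference: A/B testing tells you which ad won. Microtesting tells you why it won (which component drove performance), so you can replicate that insight across every future campaign.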
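Replicating component-level insight is easier if you record winning components as you find them. Below is a minimal sketch of a hypothetical component library; the class and method names are illustrative, not from any ad tool. It stores each round’s winners by element type and lists every ad you could assemble from validated parts only.

```python
from itertools import product


class ComponentLibrary:
    """Hypothetical record of components that won their microtest round, grouped by element type."""

    def __init__(self) -> None:
        self.validated: dict[str, list[str]] = {}

    def add_winner(self, element: str, component: str) -> None:
        """Record a winning component once, e.g. add_winner("hook", "hook_a")."""
        winners = self.validated.setdefault(element, [])
        if component not in winners:
            winners.append(component)

    def combinations(self) -> list[dict[str, str]]:
        """Every ad that can be assembled using only validated components."""
        elements = sorted(self.validated)
        pools = [self.validated[e] for e in elements]
        return [dict(zip(elements, combo)) for combo in product(*pools)]


library = ComponentLibrary()
library.add_winner("hook", "hook_a")
library.add_winner("hook", "hook_c")
library.add_winner("offer", "free_audit")
library.add_winner("format", "talking_head_video")
library.add_winner("hook", "hook_a")  # a repeat win is stored only once
for ad in library.combinations():
    print(ad)
```

Each new winner multiplies the number of ready-made combinations. This is one concrete way to read the idea that each test makes every future test better.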

Why Microtesting Works Better for High-Ticket Founders

If you’re selling $5K+ services or products, your audience is small and your conversions are expensive.

This creates three problems that A/B testing can’t solve:

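Problem 1: Sample size. You need ~100 conversions per variant for statistical significance in A/B testing. At $50 per lead, a two-variant test that counts leads as conversions costs $10,000. If the conversion that matters is a closed sale and roughly 1 in 10 leads closes, that same test approaches $100,000. Microtesting uses leading indicators (CTR, CPC, hook rate) that generate signals with far less data.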

Problem 2: Speed. High-ticket sales cycles are long. You can’t afford to spend three weeks testing your ad before you even start generating leads. Microtesting gives you answers before the end of the week.

Problem 3: Compounding learning. A/B testing gives you one binary answer—A or B. Microtesting gives you a library of validated components you can mix, match, and stack. Each test makes every future test better.
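The arithmetic behind Problem 1 can be written out. This sketch assumes the ~100-conversions-per-variant rule of thumb from the text and a two-variant test. The 10% lead-to-sale rate in the second call is a hypothetical assumption, not a figure from this post.

```python
def conversion_test_cost(conversions_per_variant: int, variants: int, cost_per_lead: float,
                         lead_to_conversion_rate: float = 1.0) -> float:
    """Ad spend needed before every variant has collected the target number of conversions.

    lead_to_conversion_rate=1.0 treats each lead as the conversion; a lower value
    models a deeper event, such as a closed sale, that only some leads reach.
    """
    leads_needed = conversions_per_variant * variants / lead_to_conversion_rate
    return leads_needed * cost_per_lead


# ~100 conversions per variant, two variants, $50 per lead.
print(f"${conversion_test_cost(100, 2, 50):,.0f}")        # conversion = lead
print(f"${conversion_test_cost(100, 2, 50, 0.10):,.0f}")  # conversion = sale, assuming 1 in 10 leads closes
```

The deeper the conversion event sits in a high-ticket funnel, the faster the cost of a significant A/B test grows.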

When A/B Testing Still Makes Sense

To be clear: A/B testing isn’t obsolete.

It still works when:

You already have a working funnel with high traffic volume (ideally 1,000+ daily visitors) and aren’t trying to validate a new offer.

You need statistically defensible data for stakeholders or investors.

You’re testing major structural changes, like pricing models.

If you’re a VC-backed SaaS company spending $50K/month on ads with a dedicated growth team, A/B testing is still a powerful tool in your stack.

But if you’re a founder or small team trying to validate an offer, find your winning message, or launch a new campaign—microtesting gets you there 10x faster at a fraction of the cost.

The Real Advantage of Microtesting

The biggest advantage of microtesting is not speed. It is understanding.

One-click AI campaigns can generate ads instantly. But they are trained to model what already works. They remix other creators’ work. They optimize patterns. They do not build first-principles clarity about your specific audience.

Microtesting forces you to answer the uncomfortable foundational questions first.

Who exactly is your ICP (ideal customer profile)?

What emotional pain actually makes them react?

What language triggers identification?

Instead of copying surface-level patterns, you extract real data about how your audience thinks and what they respond to. That knowledge compounds. It becomes repeatable. It becomes scalable.

Once you comprehend the rules, you can break them, but not before.

AI is powerful. We use it every day. But it should amplify validated insight, not replace it. If you sell high-ticket services, guessing is expensive. Microtesting replaces guesswork with measurable learning before you scale.

If you want to validate your offer and messaging with real data instead of assumptions, start with a microtest.

SEE YOU NEXT WEEK!

Use our free signals

Use our free signals to get better results. If you want help microtesting your ads, book a consultation below.

Thank you, talk soon!