If you had told me two years ago that I would end up programming an app, I would not have believed you. Yet here I am, vibe-coding. 🤓

What we've been building

For the past few weeks, Claude Code—yes, an AI—and I have been building the app that runs every sprint you've read about in this newsletter.

It's called 1Signal, and it's the engine behind every microtest result I've shared.

Here's what happened this week alone:

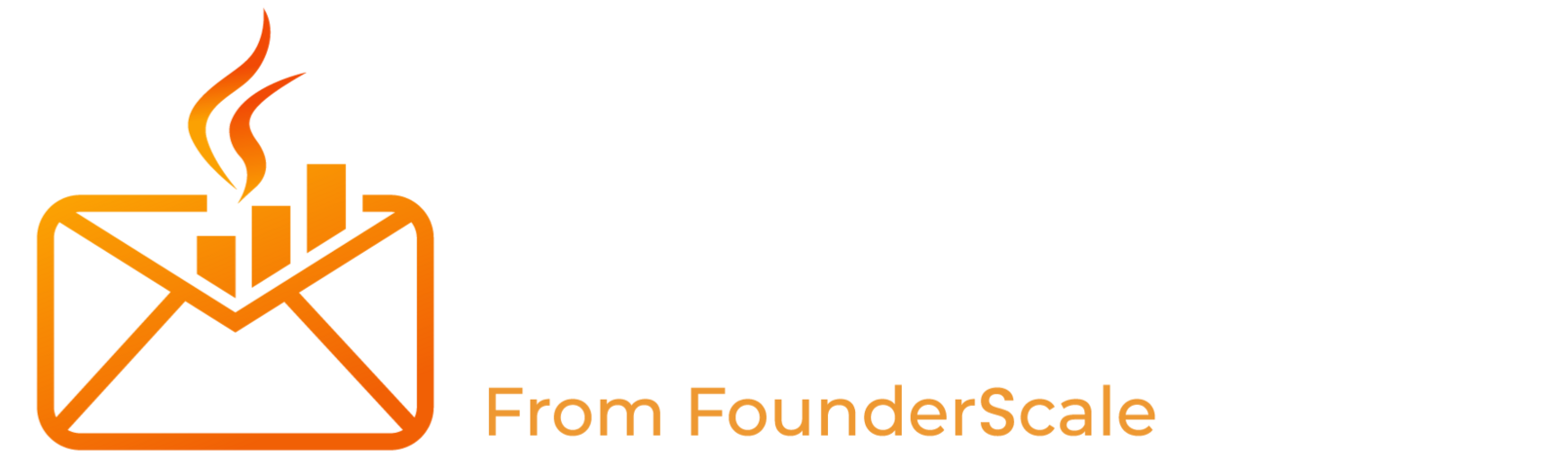

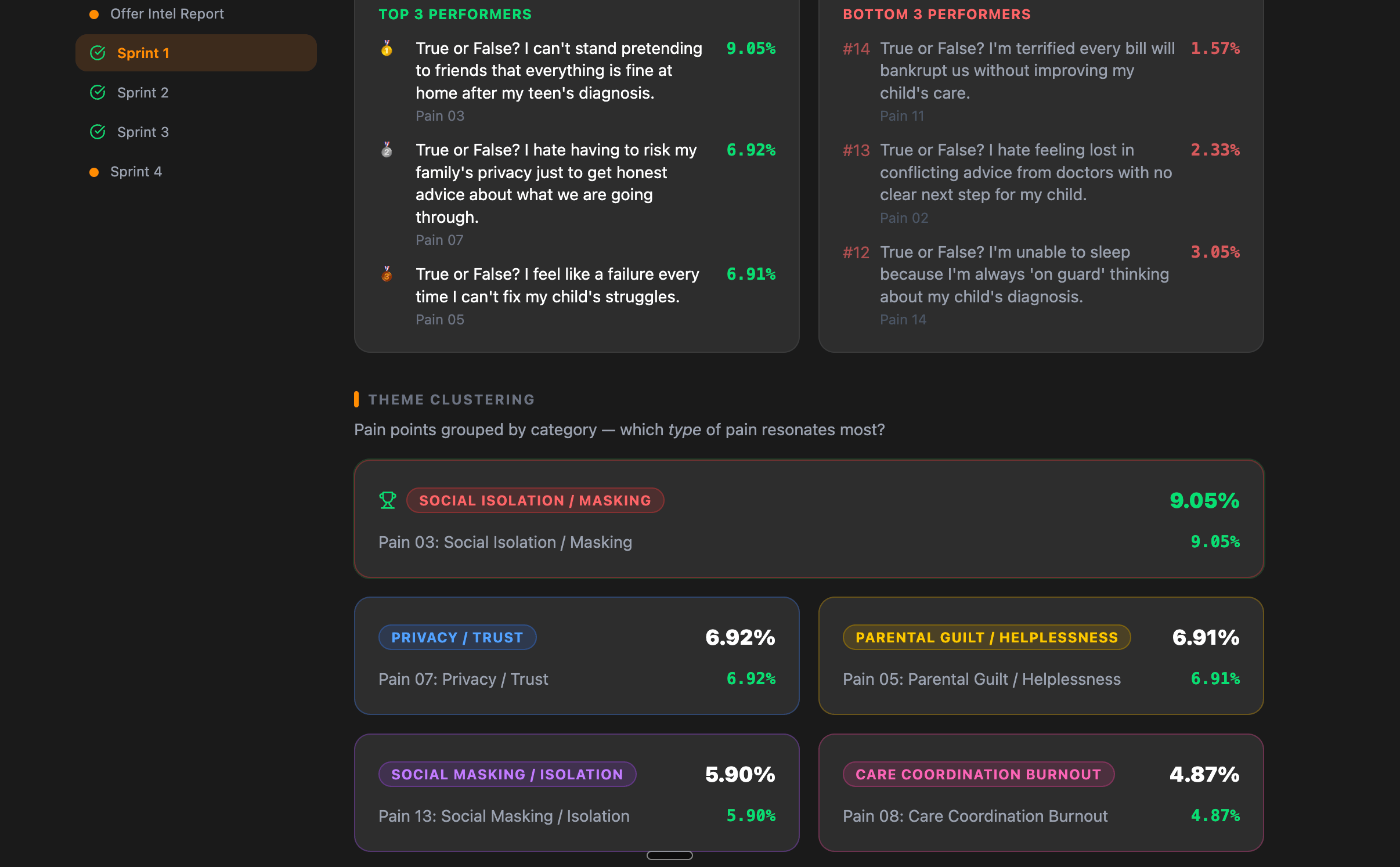

Sprint 1 analysis got a complete overhaul. When a pain point sprint finishes, you don't just get a spreadsheet of numbers.

You get winner clustering, loser analysis, confidence scoring, and a full interpretation of why your audience clicked what they clicked.

A real client's Sprint 1 results—parsed, ranked, and explained.

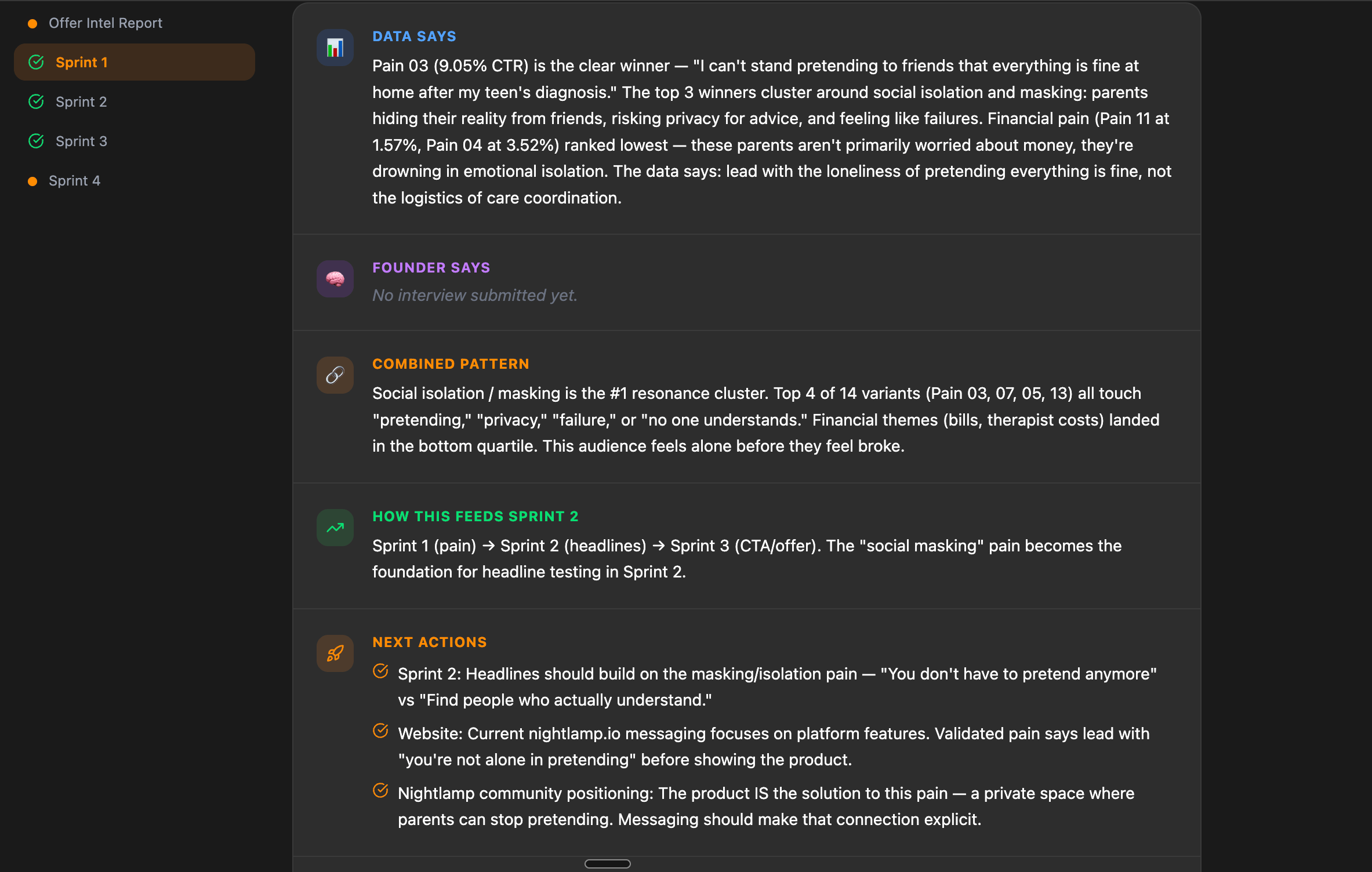

Sprint 2 and Sprint 3 got the same treatment. Headline testing (Sprint 2) now shows you exactly how each headline performed against your current website headline as a baseline.

You can see which language your audience responds to—and which falls flat.
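For the curious, here is roughly what "performed against a baseline" can mean in code. This is a minimal sketch, not 1Signal's actual implementation: it assumes the metric is click-through rate, and the class name, function name, and all numbers are made up for illustration.

```python
from dataclasses import dataclass


@dataclass
class HeadlineResult:
    label: str
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        # Click-through rate; 0.0 when nothing was shown yet.
        return self.clicks / self.impressions if self.impressions else 0.0


def lift_vs_baseline(baseline, variants):
    """Return (label, relative lift) pairs, best performer first."""
    if baseline.ctr == 0:
        raise ValueError("baseline has no clicks; relative lift is undefined")
    lifts = [(v.label, (v.ctr - baseline.ctr) / baseline.ctr) for v in variants]
    return sorted(lifts, key=lambda pair: pair[1], reverse=True)


# Hypothetical numbers, not real client data.
baseline = HeadlineResult("current website headline", impressions=1000, clicks=20)
variants = [
    HeadlineResult("headline A", impressions=1000, clicks=30),
    HeadlineResult("headline B", impressions=1000, clicks=15),
]
ranked = lift_vs_baseline(baseline, variants)
for label, lift in ranked:
    print(f"{label}: {lift:+.0%} vs. baseline")
```

A positive lift means the test headline pulled more clicks per impression than the current website headline; a negative lift means it fell flat.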

Sprint 3 (offer types) breaks down which CTA format actually pulls action: free audit vs. strategy call vs. downloadable guide. Side-by-side. With data.

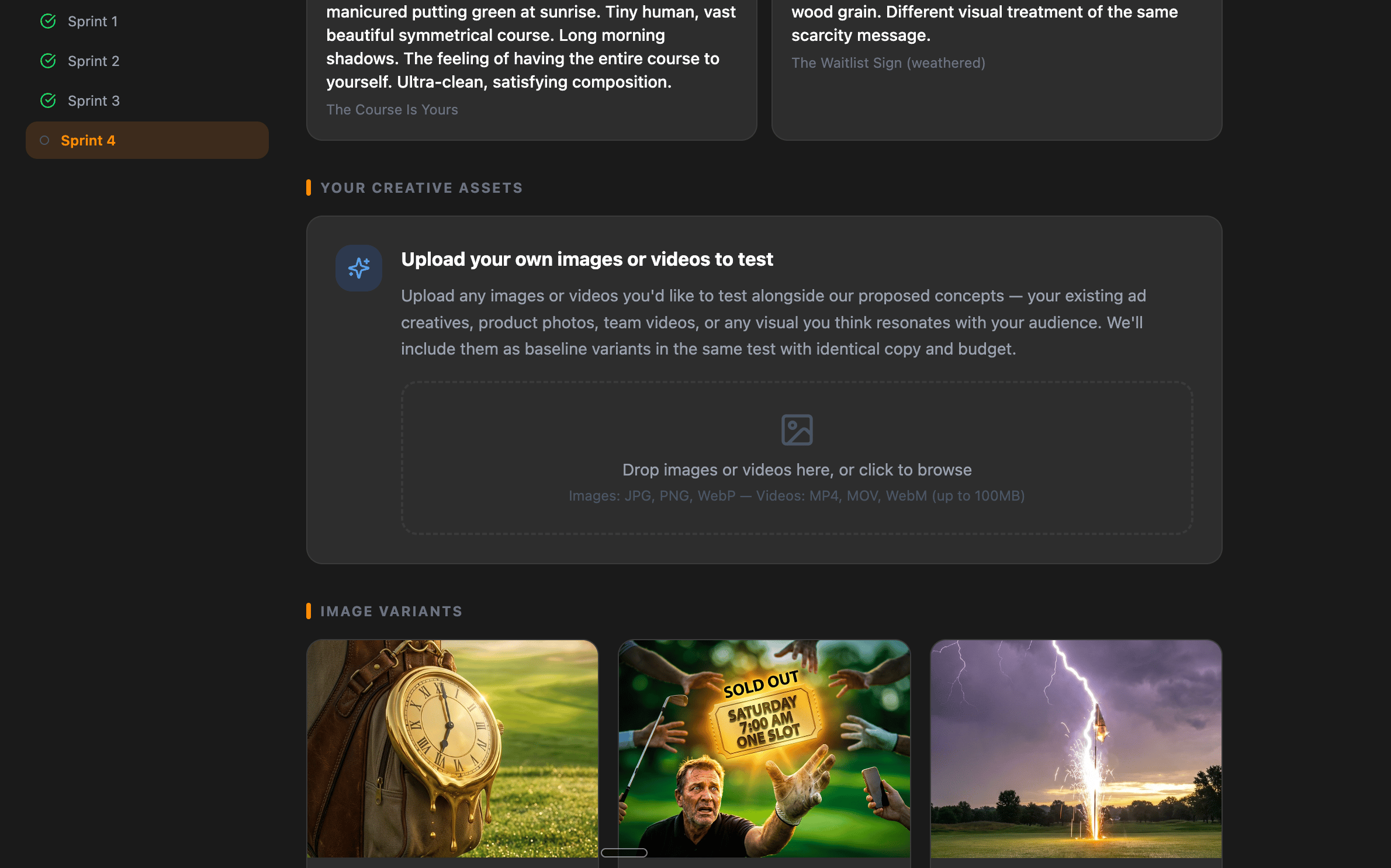

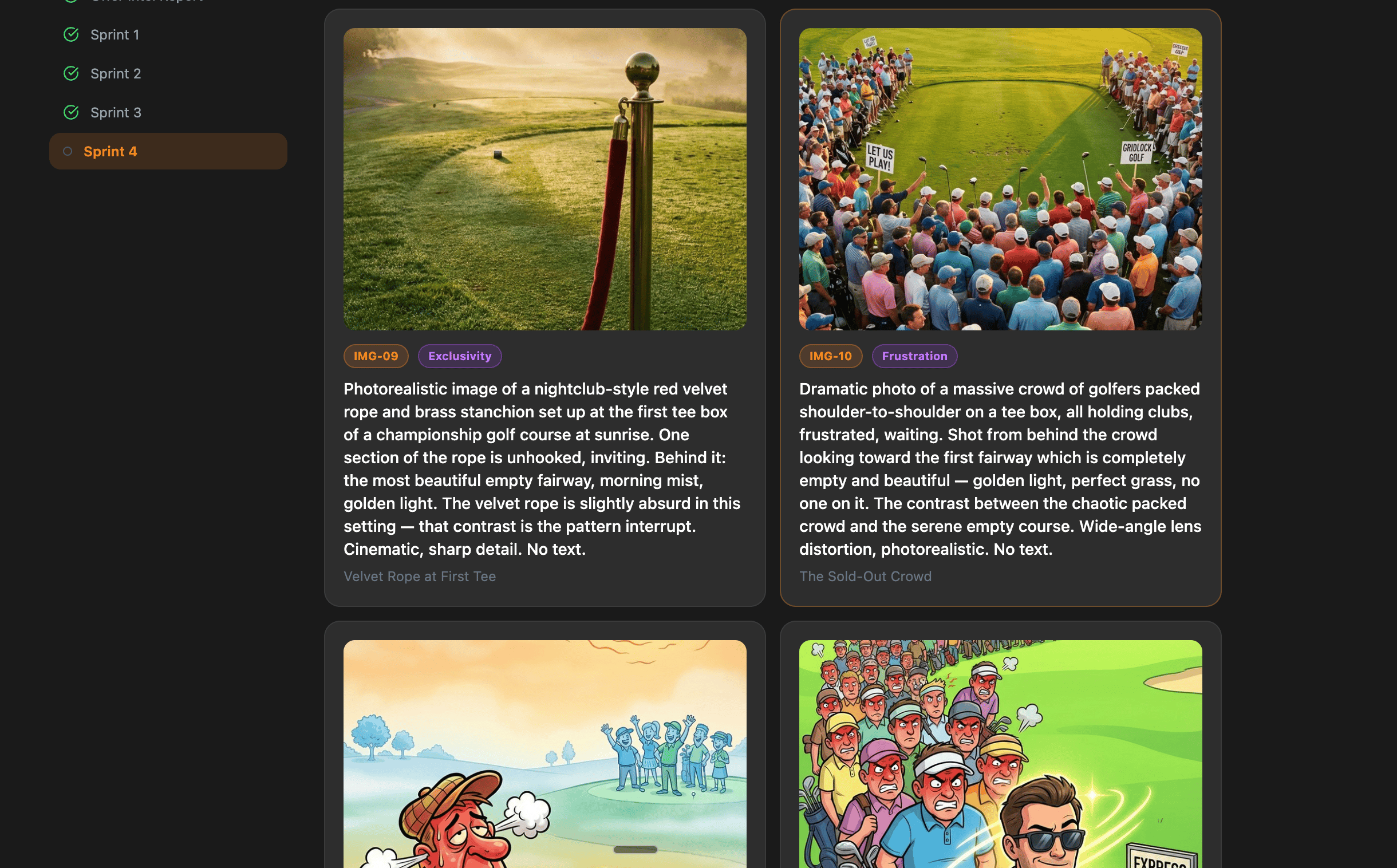

And then we shipped Sprint 4 image creative testing with full upload support.

You can now upload your images—founder photos, data visualizations, lifestyle shots, and surrealist concepts—and the system tests each one in isolation against the pain point, headline, and offer that already won in Sprints 1-3.

Every variable locked. Only the visual changes.
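If you think in code, the idea looks something like this. It is a hedged sketch of the "lock everything, change one thing" setup, not the app's real data model: the `Creative` class, the helper names, and the file names are all hypothetical.

```python
from dataclasses import dataclass, fields, replace


@dataclass(frozen=True)
class Creative:
    pain_point: str
    headline: str
    offer: str
    image: str


def image_variants(control, image_files):
    """Copy the control creative once per image, changing only the image."""
    return [replace(control, image=name) for name in image_files]


def changed_fields(a, b):
    """List the field names whose values differ between two creatives."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]


# Placeholder values standing in for the winners of Sprints 1-3.
control = Creative(
    pain_point="winning pain point",
    headline="winning headline",
    offer="winning offer",
    image="current-image.png",
)
variants = image_variants(control, ["founder-photo.png", "data-viz.png"])
for variant in variants:
    print(variant.image, "differs from control in:", changed_fields(control, variant))
```

Because each variant differs from the control in exactly one field, any difference in results can be attributed to the image alone.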

We built the upload infrastructure, the analysis views, the approval workflows, and the side-by-side comparison tool.

Where we are right now

1Signal is live and running in an invite-only beta.

For now, only my one-on-one, done-for-you service clients have access. It's not public yet, and there is no launch date.

If you want to see the full app, including the dashboards, the sprint flows, and the analysis pages, then start

Roman